ACRES - Software - Altair SimLab

Overview and Scope

This article describes how to solve Altair SimLab problems on ACRES. The problem must be set up on a graphical workstation beforehand; only the numerical solution is performed on ACRES. In essence, the process consists of exporting a solver input file for the model, choosing the correct solver (and the corresponding SLURM submit script template), adjusting the SLURM submit script as needed, and finally submitting the job and retrieving the results. It is assumed that the reader is already familiar with the basics of using ACRES and of working on a Linux command line.

Solutions to some potential errors are given at the end of this article. If you encounter a problem, please check whether its solution is listed there.

Exporting A Solver Input File

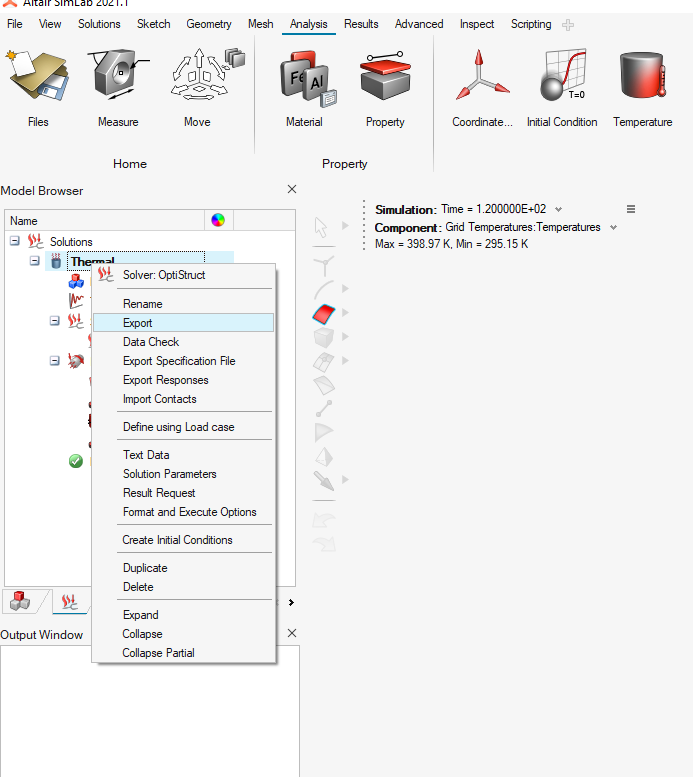

To export a solver input file, right-click on your "solution" in the model tree → export.

You should now see a solver input file at the file location you specified.

Log in to ACRES. Create a directory in which you would like to run the job, and copy the solver input file from your local computer to that directory. (If you are unfamiliar with this, please see: How to Transfer Files between Acres and Your Local Computers)

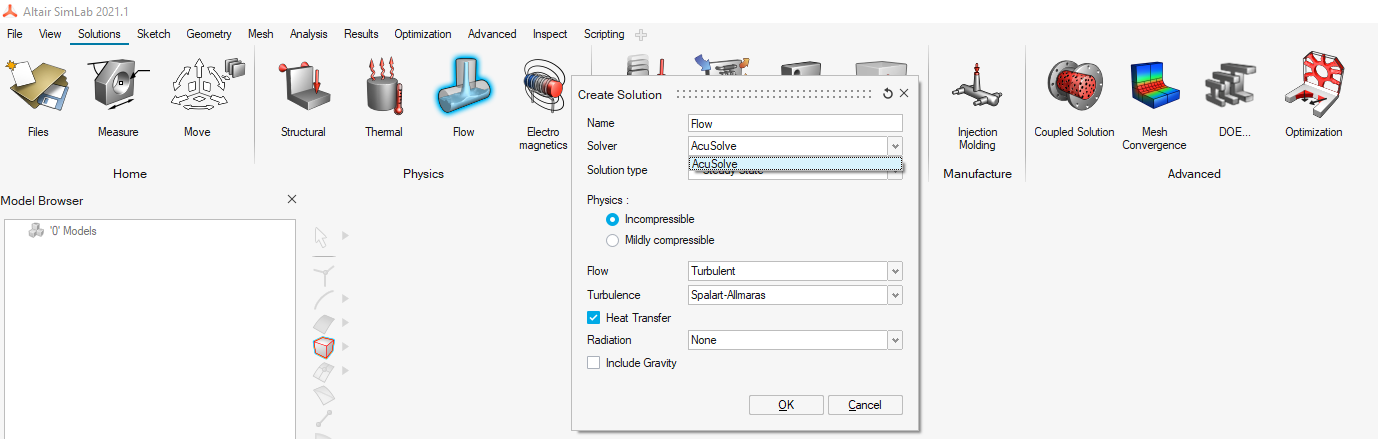

Choosing the Appropriate Solver

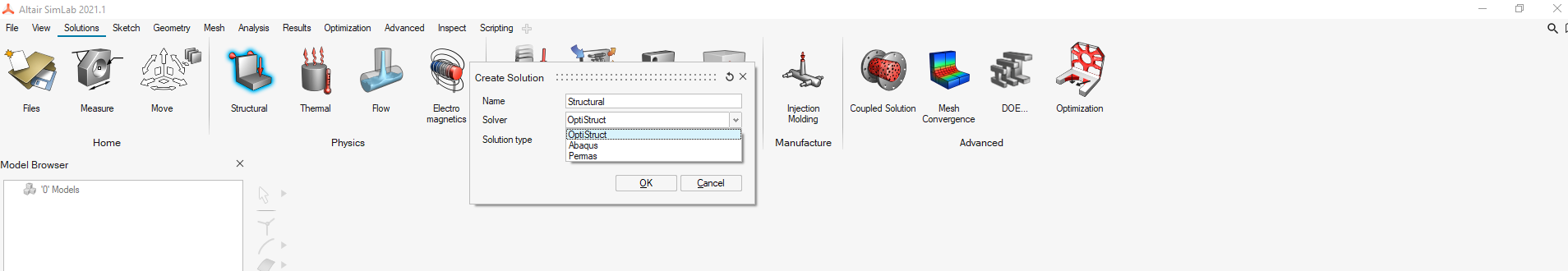

When creating a "solution" of the model, a solver is chosen from a drop-down list. The executable to run in your SLURM script will have a similar name to that solver. For example, to run a structural job you can use "Optistruct", which is available as the "optistruct" command in the SLURM submit script on ACRES once the "Altair/SimLab/2021.1" module has been loaded (see optistruct-submit.sh for an example).

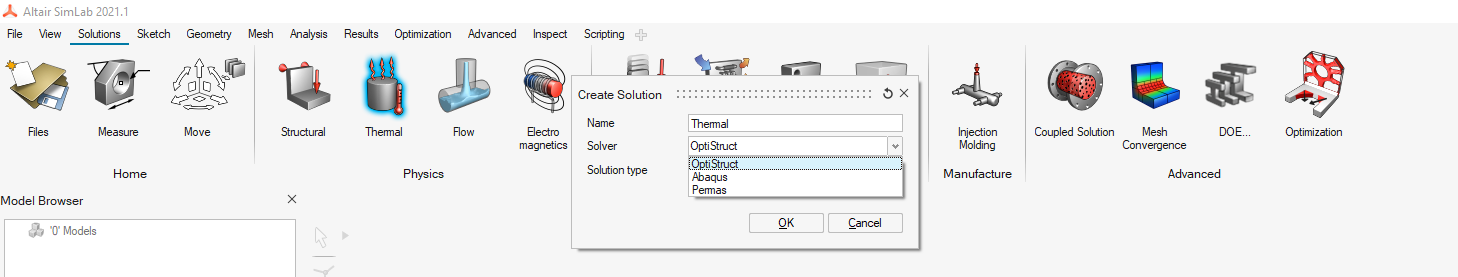

The same solvers are offered for thermal solutions as for structural ones:

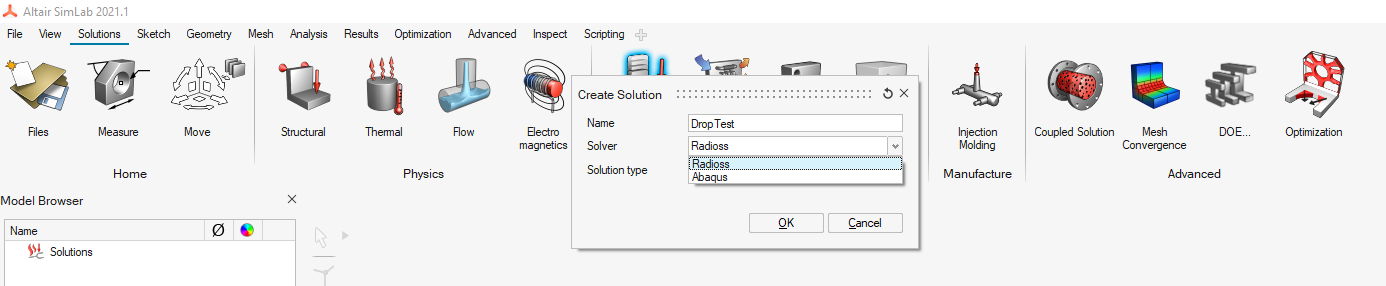

Notice that the default solver for the "Drop Test" is "Radioss". This corresponds to the "radioss" command on ACRES.

The general-purpose fluid flow solver is "AcuSolve", which corresponds to the "acuRun" command on ACRES.
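The correspondence between solver names and commands described above can be summarized in a small lookup function. This is only a sketch: the function name solver_command is made up for illustration, and it covers just the three solvers named in this article.

```shell
#!/bin/sh
# Map a SimLab solver name to the command used on ACRES.
# Only the three solvers named in this article are covered.
# (solver_command is a hypothetical helper name.)
solver_command() {
    case "$1" in
        Optistruct) echo "optistruct" ;;
        Radioss)    echo "radioss" ;;
        AcuSolve)   echo "acuRun" ;;
        *)          echo "unknown solver: $1" >&2; return 1 ;;
    esac
}

solver_command AcuSolve
```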

If you ever need to find a particular Altair SimLab executable, look through the directories in:

/mnt/data/altair/SimLab/altair/SimLab/bin/linux64/solvers
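One possible way to search those directories is with the standard "find" command. The sketch below assumes the path given above and a hypothetical helper name, list_solver_files; the layout of the subdirectories is not described in this article, so the search simply matches on file name.

```shell
#!/bin/sh
# Default location of the solver directories, as given in this article.
SOLVER_ROOT="/mnt/data/altair/SimLab/altair/SimLab/bin/linux64/solvers"

# List files whose name contains PATTERN under DIRECTORY.
# Usage: list_solver_files PATTERN [DIRECTORY]
# (list_solver_files is a hypothetical helper name.)
list_solver_files() {
    pattern="$1"
    root="${2:-$SOLVER_ROOT}"
    if [ ! -d "$root" ]; then
        echo "directory not found: $root" >&2
        return 1
    fi
    find "$root" -type f -name "*${pattern}*"
}

# Example (on ACRES): list_solver_files radioss
```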

Adjusting the SLURM Submit Script

Example SLURM submit scripts are available for optistruct, radioss, and acusolve:

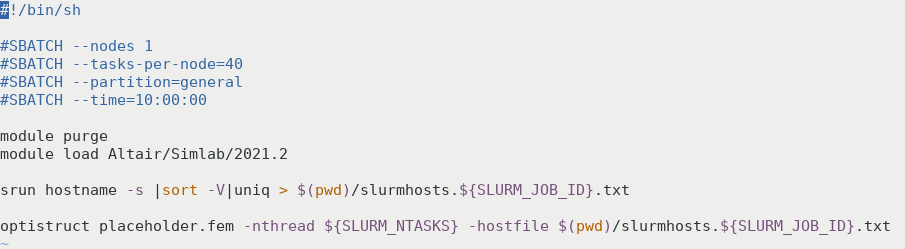

The optistruct-submit-noMPI.sh script is for analyses that do not support MPI (such as linear heat transfer analysis); the other script, optistruct-submit.sh, is for all other optistruct analyses. Using the "optistruct-submit.sh" script for an analysis that does not support MPI gives a "DDM is not supported..." error. The principal differences between the "optistruct-submit.sh" and "optistruct-submit-noMPI.sh" files are as follows:

- In the "noMPI" script, the command line option -nt or -nthreads is used to define the number of threads, as opposed to -np, which defines the number of processes.

- The hostname file for the "noMPI" script is piped through "uniq", so that the hostfile only contains one host name, instead of repeating the hostname for each task. This ensures that only one process is spawned on that host.
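The effect of piping the host list through "uniq" can be illustrated without a cluster. The sketch below simulates a task list of four tasks on one node and reduces it to a single line. The exact hostfile command used in the templates is not reproduced in this article, so the commented "srun hostname" line is an assumption rather than a quote from the scripts.

```shell
#!/bin/sh
# Reduce a list of host names (one line per task) to one line per host.
# "sort" is run first because "uniq" only removes adjacent duplicates.
# (unique_hosts is a hypothetical helper name.)
unique_hosts() {
    sort | uniq
}

# Simulated task list: 4 tasks on the same node.
printf 'compute-11-1\ncompute-11-1\ncompute-11-1\ncompute-11-1\n' | unique_hosts

# In a submit script this might look like the following
# (assumption, not taken from the templates):
#   srun hostname | sort | uniq > hostfile
```

With only one line in the hostfile, only one process is started on that host, and the thread count is then controlled by -nt.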

After choosing the appropriate SLURM script template, copy it to the directory on ACRES that contains the solver input file.

You need to edit the SLURM submit script so that the solver acts on your particular *.fem input file, by replacing the name of the placeholder *.fem file in the optistruct command. Also adjust the number of nodes and tasks appropriately. No more than one node should be used with the optistruct-submit-noMPI.sh script.
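The file name replacement can be done in any text editor. For those who prefer the command line, here is a minimal sketch using "sed". The helper name set_input_file and the file names in the example are hypothetical, and the function assumes that the new name contains no "/" or "&" characters.

```shell
#!/bin/sh
# Replace the placeholder input file name in a submit script.
# Usage: set_input_file SCRIPT OLD_NAME NEW_NAME
# A backup of the original script is kept as SCRIPT.bak.
# (set_input_file is a hypothetical helper name.)
set_input_file() {
    script="$1"; old="$2"; new="$3"
    [ -f "$script" ] || { echo "no such file: $script" >&2; return 1; }
    # Escape dots so that "placeholder.fem" is matched literally.
    old_re=$(printf '%s' "$old" | sed 's/\./\\./g')
    sed -i.bak "s/${old_re}/${new}/g" "$script"
}

# Example (file names are hypothetical):
#   set_input_file optistruct-submit.sh placeholder.fem bracket.fem
```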

The Acusolve submit script is slightly different in that the name of your input file must be listed without the file extension after the "-pb" option ("-pb" stands for "problem"). If your input file is called "placeholder.inp", then just "placeholder" should be listed after "-pb" in the run command:

#!/bin/sh

#SBATCH --nodes 1

#SBATCH --tasks-per-node=40

#SBATCH --partition=general

#SBATCH --time=10:00:00

module purge

module load Altair/Simlab/2021.2

acuRun -pb placeholder -mp mpi -np 80

Submitting the Job

Once the necessary input files and the SLURM submit script are in the desired run directory on ACRES, submit the job by running:

sbatch <submit script>

where <submit script> should be replaced by the name of your SLURM submit script, such as optistruct-submit.sh, optistruct-submit-noMPI.sh, or acusolve-submit.sh.
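As an optional safeguard, the submission can be wrapped in a small function that checks the script before calling sbatch. This is only a sketch: the function name submit_job and the DRY_RUN variable are invented for this example and are not part of SLURM or of the templates.

```shell
#!/bin/sh
# Submit a SLURM script after two basic checks.
# With DRY_RUN=1 the sbatch command is printed instead of executed.
# (submit_job and DRY_RUN are hypothetical names.)
submit_job() {
    script="$1"
    [ -f "$script" ] || { echo "submit script not found: $script" >&2; return 1; }
    grep -q '^#SBATCH' "$script" || { echo "no #SBATCH lines in $script" >&2; return 1; }
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "sbatch $script"
    else
        sbatch "$script"
    fi
}

# Example: DRY_RUN=1 submit_job optistruct-submit.sh
```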

Notes On Checking Your Job

Ordinarily, to check if your SLURM job is running at full capacity, you can "ssh" into a node on which your job is running (e.g. run the command: ssh compute-11-1), and check if it is running with the "top" command. Depending on how you run Altair solvers, what you see there may not be what you'd expect.

Optistruct with MPI: For an MPI job with the "optistruct-submit.sh" script, you should see as many processes running on the host as specified in the "tasks-per-node" option, and they should all be running around 100% CPU load, as expected.

Optistruct without MPI: If you ran the "optistruct-submit-noMPI.sh" script, you should instead see only one process, running at about 100% times the "tasks-per-node" value (e.g. 400% for 4 tasks). This is because the tasks are not running as separate MPI processes, but as separate threads of a single process.

Acusolve: Acusolve features a hybrid MPI / threading capability, so it automatically runs on as many threads as it can on a single host, while only spawning separate processes for running across multiple hosts, or if the user further limits the number of threads per process with the "-nt" option.

Radioss: If you are using Radioss, note that Radioss only spawns one process with the name "radioss" as the master process, but spawns other processes with names like "e_2021.2_linux6" which do the work.

The "radioss" master process will likely not make the list given by "top", as it does not induce much CPU load.
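The two Optistruct cases described above can be written down as a small calculation of what "top" is expected to show on one node. This is a sketch with a made-up function name, expected_top_view; it does not cover Acusolve or Radioss, whose process layout is described only qualitatively here.

```shell
#!/bin/sh
# Print what "top" is expected to show on one node for an Optistruct job.
# Usage: expected_top_view MODE TASKS_PER_NODE   (MODE is "mpi" or "threads")
# (expected_top_view is a hypothetical helper name.)
expected_top_view() {
    mode="$1"; tasks="$2"
    case "$mode" in
        mpi)     echo "$tasks process(es) at about 100% CPU each" ;;
        threads) echo "1 process at about $((100 * tasks))% CPU" ;;
        *)       echo "unknown mode: $mode" >&2; return 1 ;;
    esac
}

expected_top_view threads 4
```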

Retrieving the Results

To retrieve the results:

- Copy the output files back to your local workstation.

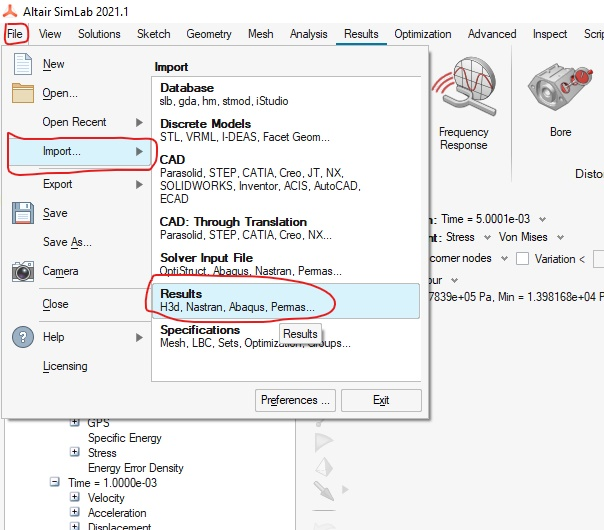

- Launch SimLab and click "File" → "Import" → "Results" (as shown below).

- Select the appropriately named output file, depending on the solver you used.

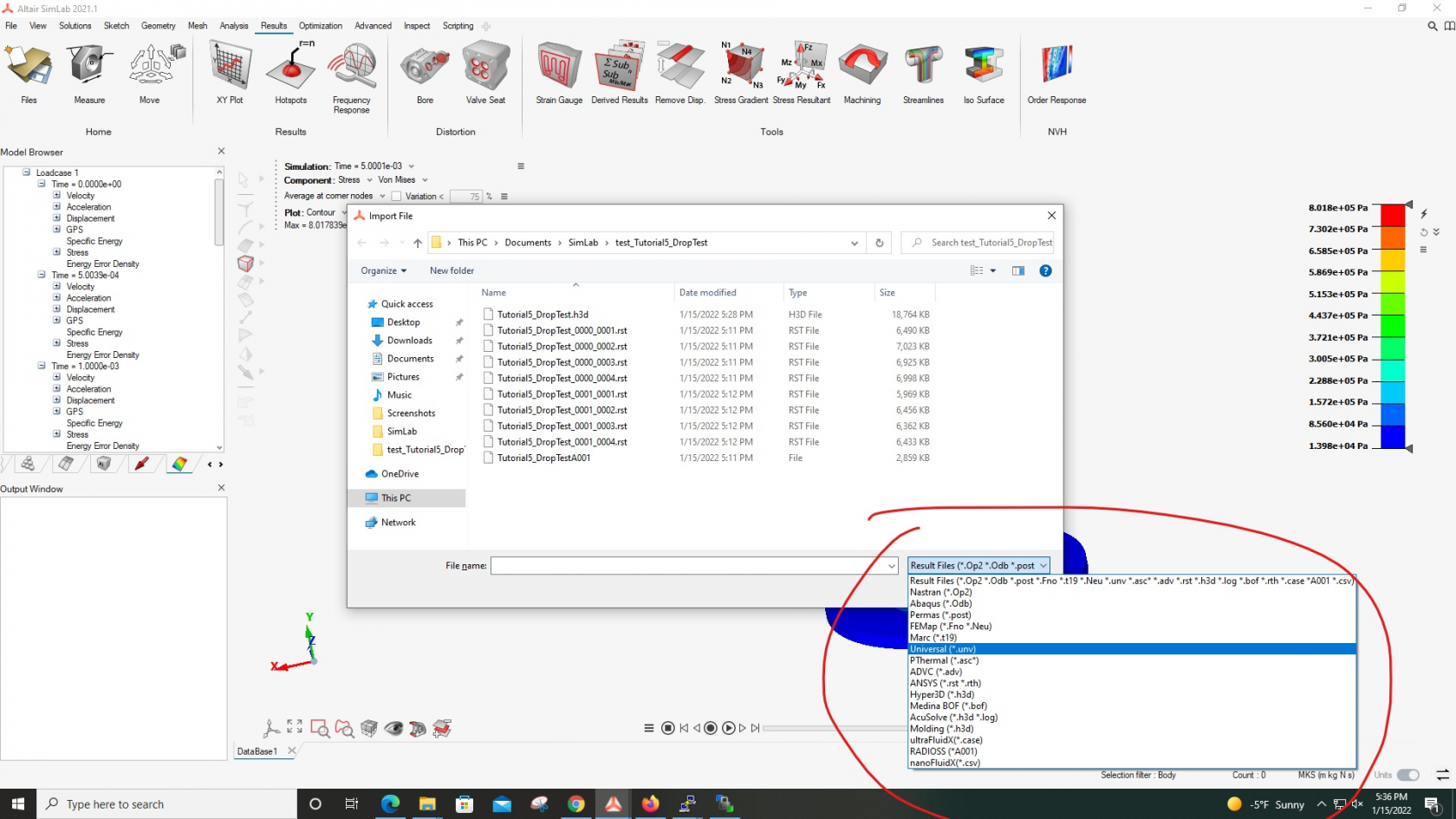

Below is a list of some of the solvers and the corresponding results output file that should be selected.

Optistruct - *.h3d

Radioss - A001

AcuSolve - *.Log
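The same list can be expressed as a lookup, which may be convenient when deciding which files to copy back. The function name result_file_pattern is hypothetical, and the patterns are exactly those listed above.

```shell
#!/bin/sh
# Print the results file (or file pattern) to import for a given solver command.
# The patterns are the ones listed in this article.
# (result_file_pattern is a hypothetical helper name.)
result_file_pattern() {
    case "$1" in
        optistruct) echo "*.h3d" ;;
        radioss)    echo "A001" ;;
        acuRun)     echo "*.Log" ;;
        *)          echo "unknown solver command: $1" >&2; return 1 ;;
    esac
}

result_file_pattern optistruct
```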

You may also get a hint as to which type of results file corresponds to which solver by looking at the file-type drop-down list in the file explorer. (Note that *.h3d is the file type for the optistruct command, even though Optistruct is not explicitly listed.)

Notice

To load the Acusolve results, the ACUSIM.DIR directory must be present alongside the *.Log file. You may also convert the Acusolve results to *.h3d format, using "acuTrans -pb <problem name> -to h3d".
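Before copying the results back, it may help to confirm that both pieces are present. The following sketch checks a directory for ACUSIM.DIR and at least one *.Log file; the function name check_acusolve_results is made up for this example.

```shell
#!/bin/sh
# Check that a directory holds what SimLab needs to load Acusolve results:
# the ACUSIM.DIR directory and at least one *.Log file.
# Usage: check_acusolve_results [DIRECTORY]   (defaults to the current directory)
# (check_acusolve_results is a hypothetical helper name.)
check_acusolve_results() {
    dir="${1:-.}"
    [ -d "$dir/ACUSIM.DIR" ] || { echo "missing $dir/ACUSIM.DIR" >&2; return 1; }
    for f in "$dir"/*.Log; do
        [ -f "$f" ] && return 0
    done
    echo "no *.Log file in $dir" >&2
    return 1
}

# Example: check_acusolve_results /path/to/run/directory
```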

Note on Simlab Version

Currently, SimLab version 2021.2 is needed to view the results from a multi-process (MPI) acusolve run. An update is planned to upgrade the SimLab version available through AppsAnywhere from 2021.1 to 2021.2. However, if acusolve is run on a single node, it will automatically use parallel threading instead of MPI, and results from this kind of run may be loaded into Simlab version 2021.1.

Errors and Solutions

If you encounter a "DDM is not supported..." error, try using a single-node script such as optistruct-submit-noMPI.sh. If you are not using optistruct, create such a script by:

- adding "|uniq" to the hostfile creation command

- setting the SLURM number of nodes to 1

- changing "-np" to "-nt" or "-nthreads" in the executable command in your current script.
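Two of these three changes are simple text substitutions and can be sketched with "sed". The filter below reads a submit script on standard input and writes the modified version to standard output. It is an illustration only: the function name to_single_node_threads is hypothetical, it assumes the script uses "#SBATCH --nodes" and a " -np " option as in the examples in this article, and it does not touch the hostfile command, which is not reproduced here.

```shell
#!/bin/sh
# Rewrite a submit script (stdin -> stdout) for a single-node, threaded run:
#   - set the SLURM node count to 1
#   - change the first " -np " on each line to " -nt "
# The "|uniq" change to the hostfile command must still be made by hand.
# (to_single_node_threads is a hypothetical helper name.)
to_single_node_threads() {
    sed -e 's/^#SBATCH --nodes[ =][0-9][0-9]*/#SBATCH --nodes=1/' \
        -e 's/ -np / -nt /'
}

# Demonstration on two sample lines (solver name and input file are made up).
printf '#SBATCH --nodes 2\nsolver -np 8 input.fem\n' | to_single_node_threads
```

Review the result before submitting it, since the solver may need other options to be changed as well.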